Every AI design framework I have seen this year has the same flaw: it tells you what to do instead of building your team's capacity to figure out what you actually need.

That is not a minor distinction. It's the whole problem.

The market is flooded right now with 90-day transformation plans, certified AI design sprints, and step-by-step workflows, all built around someone else's team, someone else's tools, and someone else's business. Most of them fail not because the ideas are bad, but because they treat context as a variable you can ignore.

At Auth0, we did not adopt a playbook. We built a capability, and the path looked different from anything we had read. What created clarity was not the specific tools we chose or the process we ran. It was being honest about where we were before deciding where to go.

What we learned: the tactics are not prescribed. The outcomes are.

The Four Stages of AI-Native Design

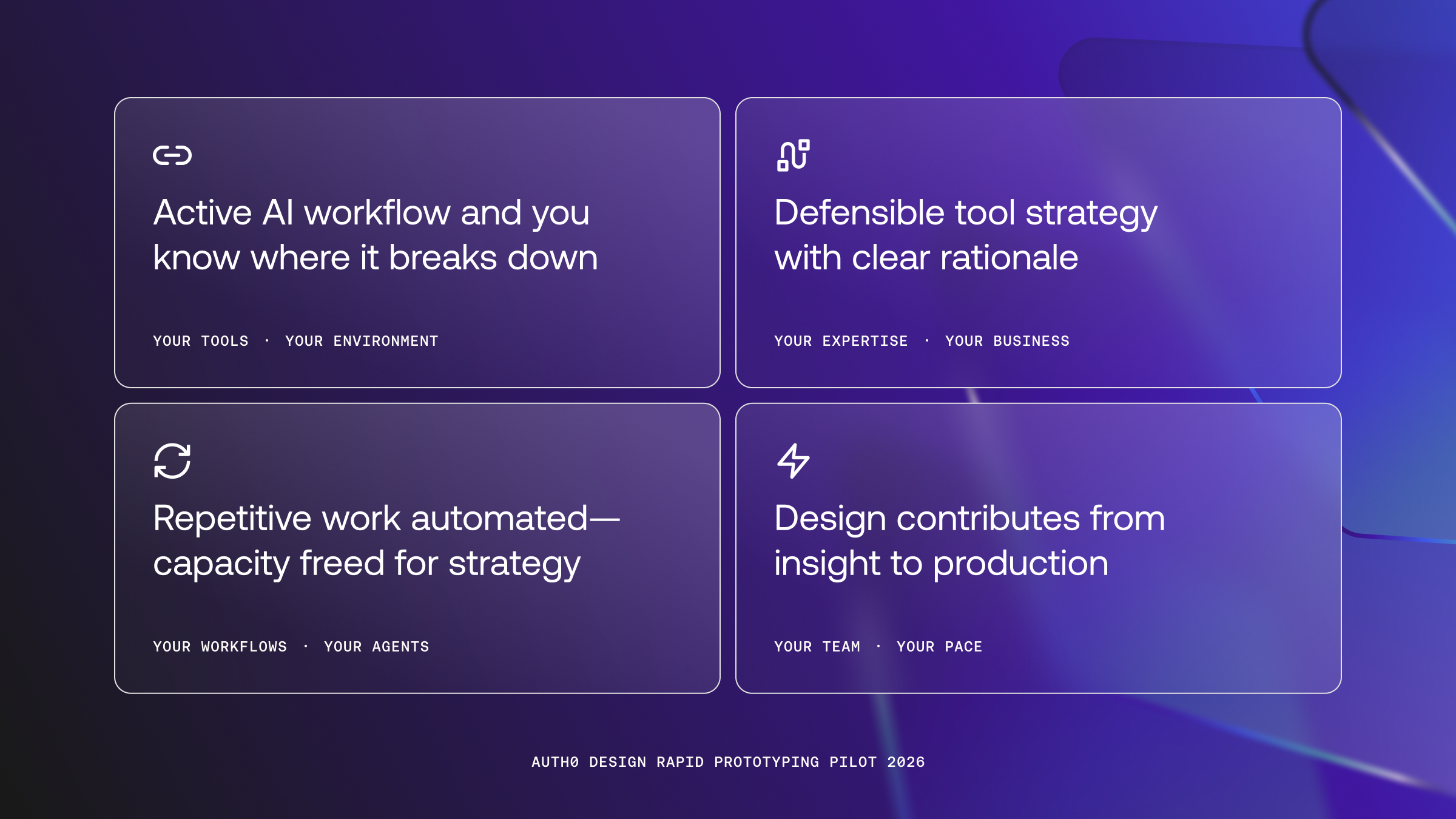

Each stage of AI-native design has a clear outcome. How your team reaches it depends on your people, your tools, and your business context. That part is yours to figure out, and it should be.

Stage 1: Establish an active AI workflow

An active AI workflow exists when teams use AI tools to augment their contributions and can map the specific moments where AI reduces friction in their work. This requires human engagement: designers provide supervision, validation, and judgment rather than fully delegating tasks.

"Designers are experimenting with AI" is not a milestone. It is a starting condition. The real outcome is a team that has moved from curiosity to practice — that has mapped the specific moments where AI reduces friction and the moments where it does not. At Auth0, this meant creating a low-stakes environment where designers could work with AI tools outside of production pressure, document what worked, and bring the findings back to the team. What that looks like in your org will depend on your risk tolerance, your tooling access, and how much psychological safety already exists on your team. There is no shortcut to finding out.

Outcome: Your team has a working AI workflow and knows exactly where it breaks down.

Stage 2: Develop a defensible tool strategy

The tooling landscape is loud. Every tool claims to solve the problem. Teams that adopt reactively, chasing what is popular rather than what fits, lose time and trust. The question is not which tools are best. It is which tools match your team's current expertise, integrate with your existing stack, and can scale as your practice matures. A principled stack your team can explain is worth more than an optimal one nobody understands. Clarity here shortens time to value everywhere else.

Outcome: Your org has made deliberate tool choices with clear rationale.

Stage 3: Build AI infrastructure for design

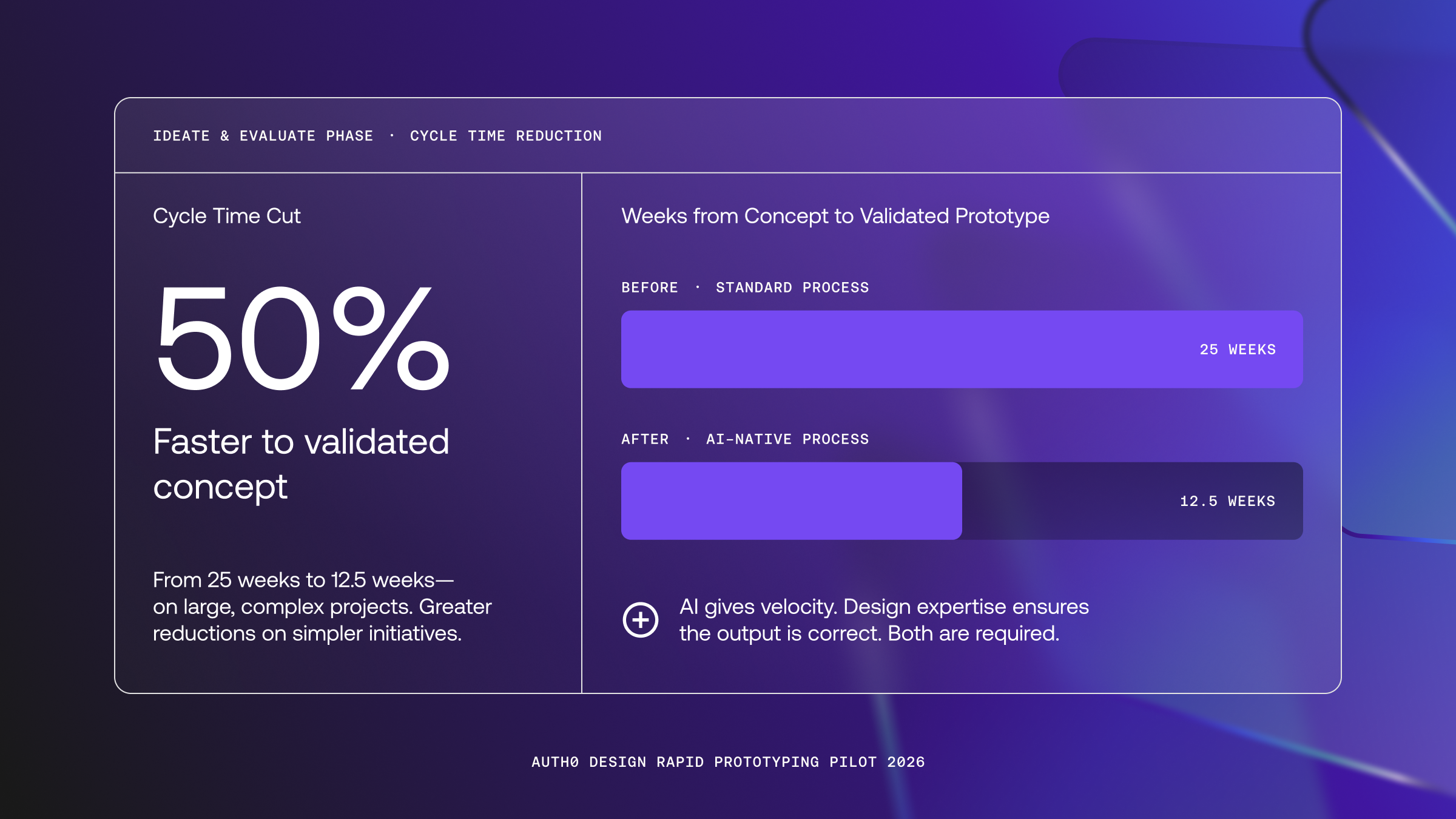

This is where most conversations about AI and design stay shallow. Faster variant generation is noise reduction. The real opportunity is in the structural inefficiencies, the work that consumes design capacity without producing strategic value: user research synthesis, prototype iteration, QA, and validation loops. At Auth0, we ran a two-week rapid prototyping pilot that cut our ideate and evaluate phases from 25 weeks down to 12.5, a 50% reduction in cycle time. Not because we found the right tools. Because we built the right infrastructure around our team's expertise and let design guide the quality of what came out. The lesson from that pilot: AI makes it easy to generate. It does not make it easy to be right. When design owns that infrastructure, the team stops executing tasks and starts shaping outcomes.

Outcome: Repetitive, time-intensive design work is automated, freeing the team for higher-order contribution.

Stage 4: Redefine where design contributes

This is the uncomfortable one, and it is worth being precise about what it means. It is not about designers replacing engineers. It is about design owning meaningful contributions at every stage of the product lifecycle, not just the hand-off: ideation, prototyping, validation, quality, metrics, and yes, shipping production-ready code through pull requests. The teams doing this are not skipping engineering. They are pairing with engineers earlier, more fluidly, and with more shared context. Designers who operate across that arc become indispensable. Designers who stay inside the traditional lane become a bottleneck, no matter how skilled they are within it.

Outcome: Design is a full-cycle contributor — from insight to production.

The Honest Starting Point

Where does your team actually sit across these four stages? Not aspirationally. Right now, in 2026.

Most teams I talk to have made progress on Stages 1 and 2. They have workflows. They have tested tools. But they have stalled before Stage 3 because building infrastructure requires investment (in time, in tooling, and in organizational trust) that feels risky to ask for without proof. The pilot data helps. But the ask still has to come from somewhere.

That assessment — of expertise, tooling, and risk tolerance — is the only legitimate foundation. Anyone selling a shortcut past it is selling their formula, not your solution.

The design teams that will matter have not found the right playbook. They have built the judgment to know what their team needs, and the infrastructure to deliver it.

That work starts with honesty, not a framework. To learn more about how we retain quality for both human and agentic experiences, see our Developer Experience Principles.

About the author

Micheal Lopez

Vice President, Design & Research (Auth0)

Micheal Lopez spent 20 years designing at the intersection of developer experience and technical platforms. In his current role as Vice President of Design at Auth0, Micheal leads the product design, user research, and design operations functions. He is known for uniting cross-functional teams to deliver cohesive, end-to-end customer experiences that bridge marketing and product. Prior to Auth0, Micheal was Vice President of Brand & Design at Data.ai, and held design leadership roles at Segment and Google. He's currently developing the Design Builder model: a new kind of practitioner who prototypes in code, runs synthetic user tests with AI personas, and ships directly to production. He writes about what AI-native design actually requires, and why most design teams are asking the wrong questions.